Magna to Acquire Wipac Czech s.r.o., Expand Lighting Capabilities

- Acquisition to strengthen engineering and electronics competencies
- Will increase Magna’s forward lighting engineering capability in Europe
- A complement to the 2018 acquisition of OLSA S.p.A.

AURORA, Ontario, Nov. 20, 2019 (GLOBE NEWSWIRE) - Magna has agreed to acquire Wipac Czech s.r.o., a premium automotive lighting engineering firm located in Ostrava, Czech Republic. The transaction – Magna’s second lighting…

Category: Suppliers

Abrupt Stop Detection

Human drivers confront and handle an incredible variety of situations and scenarios (terrain, roadway types, traffic conditions, weather conditions) that autonomous vehicle technology must also navigate, both safely and efficiently. These are edge cases, and they occur with surprising frequency. To achieve advanced levels of autonomy or breakthrough ADAS features, these edge cases must be addressed. In this series, we explore common, real-world scenarios that are difficult for today’s conventional perception solutions to handle reliably. We’ll then describe how AEye’s software-definable iDAR™ (Intelligent Detection and Ranging) successfully perceives and responds to these challenges, improving overall safety.


Challenge: A Child Runs into the Street Chasing a Ball

A vehicle equipped with an advanced driver assistance system (ADAS) is cruising down a leafy residential street at 25 mph on a sunny day, with a second vehicle following behind. The ADAS vehicle’s driver is distracted by the radio. Suddenly, a small object enters the road laterally. At that moment, the vehicle’s perception system must make several assessments before the vehicle path controls can react. What is the object, and is it a threat? Is it a ball or something else? More importantly, is a child in pursuit? Each of these scenarios requires a unique response. It’s imperative to brake or swerve for the child. However, engaging the brakes for a lone ball is unnecessary and even dangerous, since the vehicle following behind could collide with it.

How Current Solutions Fall Short

According to a recent study by AAA, today’s advanced driver assistance systems (ADAS) have great difficulty recognizing these threats or reacting appropriately. Depending on road conditions, their passive sensors may fail to detect the ball and won’t register a child until it’s too late. Alternatively, vehicles equipped with systems that are biased toward braking will constantly slam on the brakes for every soft target in the street, creating a nuisance or even causing accidents.

Camera. Camera performance depends on a combination of image quality, field of view, and perception training. While all three are important, perception training is especially relevant here. Cameras are limited when it comes to interpreting unique environments because, to a camera, everything is just a light value. Making sense of any combination of pixels requires AI, and AI can’t recognize what it hasn’t seen. For the perception system to correctly identify a child chasing a ball, it must be trained on every possible permutation of this scenario. That includes balls of varying colors, materials, and sizes, as well as children of different sizes in various clothing. The training data would also need to cover children in every possible variation: approaching the vehicle from behind a parked car, with just an arm protruding, and so on. Street conditions would need to be accounted for, too, such as streets with and without shade, and sun glare at different angles. Training for every possible scenario may be possible, but it is an incredibly costly and time-consuming process.

Radar. Radar’s basic flaw is its coarse angular resolution, typically only a few degrees. When radar picks up an object, it provides the perception system with only a few detection points, enough to distinguish a general blob in the area. Moreover, an object’s size, shape, and material influence its detectability. Soft, non-metallic objects reflect radio waves poorly, so the radar signature of a rubber or leather ball would be close to nothing. Although radar would detect the child, there would simply not be enough data or time for the system to classify the child and react.
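To put “a few degrees” in perspective, the sketch below computes how wide one angular resolution cell is at a given range, using the arc-length (small-angle) approximation. The 3° resolution and the 0.22 m ball diameter are illustrative assumptions, not figures from the article.

```python
import math

# Illustrative assumptions, not figures from the article.
ASSUMED_ANGULAR_RES_DEG = 3.0
ASSUMED_BALL_DIAMETER_M = 0.22


def cross_range_cell_m(range_m: float, angular_res_deg: float) -> float:
    """Width of one angular resolution cell at a given range (arc-length approximation)."""
    return range_m * math.radians(angular_res_deg)


for r in (10.0, 20.0, 40.0):
    cell = cross_range_cell_m(r, ASSUMED_ANGULAR_RES_DEG)
    share = ASSUMED_BALL_DIAMETER_M / cell
    print(f"{r:4.0f} m: cell about {cell:.2f} m wide, ball spans {share:.0%} of it")
```

Under these assumptions, a single cell is already about a metre wide at 20 m. A ball and a nearby child can therefore land in the same cell, which is why the returns read as one blob.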

Camera + Radar. A system that combines radar with a camera would have difficulty assessing this situation quickly enough to respond correctly. Too many factors could degrade its performance. The perception system would need to be trained for this precise scenario to classify exactly what it was “seeing.” The radar, in turn, would need to detect the child early enough, at a wide angle, and possibly from behind parked vehicles, which produce strong surrounding radar reflections. It would then need to predict the child’s path and act. In addition, radar may not have sufficient resolution to distinguish between the child and the ball.

LiDAR. Conventional LiDAR’s greatest value in this scenario is that it provides automatic depth measurement for the ball and the child, determining to within a few centimeters how far each is from the vehicle. However, today’s LiDAR systems are unable to ensure vehicle safety because they don’t gather important information, such as shape, velocity, and trajectory, fast enough. This is because conventional LiDAR systems are passive sensors that scan everything uniformly in a fixed pattern and assign every detection equal priority. As a result, they are unable to prioritize and track moving objects, like a child and a ball, over the background environment, like parked cars, the sky, and trees.

Successfully Resolving the Challenge with iDAR

AEye’s iDAR solves this challenge because it can prioritize how it gathers information and thereby understand an object’s context. As soon as an object moves into the road, a single LiDAR detection sets the perception system into action. First, iDAR cues the camera to learn about the object’s shape and color. In addition, iDAR defines a dense Dynamic Region of Interest (ROI) on the ball. The LiDAR then interrogates the object, scheduling a rapid series of shots to generate a dense pixel grid of the ROI. This dataset is rich enough to start applying perception algorithms for classification, which will inform and cue further interrogations.
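AEye does not publish how iDAR lays out shots inside a Dynamic ROI. The minimal sketch below assumes the ROI is an angular window centred on the first detection and that shots are placed on a regular grid. It shows how tightening the angular step inside the ROI multiplies the number of shots on the object. The `Roi` type, the `dense_shot_grid` function, and all angular values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Roi:
    """Hypothetical angular window (degrees) centred on a LiDAR detection."""
    az_center_deg: float
    el_center_deg: float
    half_width_deg: float
    half_height_deg: float


def dense_shot_grid(roi: Roi, step_deg: float) -> list[tuple[float, float]]:
    """Return (azimuth, elevation) shot angles covering the ROI on a regular grid."""
    if step_deg <= 0:
        raise ValueError("step_deg must be positive")
    n_az = int(round(2 * roi.half_width_deg / step_deg)) + 1
    n_el = int(round(2 * roi.half_height_deg / step_deg)) + 1
    az0 = roi.az_center_deg - roi.half_width_deg
    el0 = roi.el_center_deg - roi.half_height_deg
    return [
        (az0 + i * step_deg, el0 + j * step_deg)
        for j in range(n_el)
        for i in range(n_az)
    ]


# Hypothetical first detection of the ball at 5 deg azimuth, -1 deg elevation.
ball_roi = Roi(az_center_deg=5.0, el_center_deg=-1.0, half_width_deg=1.0, half_height_deg=1.0)
coarse = dense_shot_grid(ball_roi, step_deg=0.5)  # uniform-scan style spacing
dense = dense_shot_grid(ball_roi, step_deg=0.1)   # dense interrogation of the ROI
print(len(coarse), len(dense))  # prints: 25 441
```

The only design point illustrated is that sampling density is a per-region parameter rather than a global one.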

Once the ball has been classified, the system’s algorithms instruct the intelligent sensors to anticipate something in pursuit. The LiDAR then schedules another rapid series of shots on the path behind the ball, generating another pixel grid to search for a child. iDAR has a unique ability to intelligently survey the environment, focus on objects, identify them, and make rapid decisions based on their context.
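The article does not say how iDAR chooses the region “behind the ball.” One plausible reading is to search back along the ball’s own track, since whatever is chasing it is likely coming from the same direction. The sketch below takes that approach under stated assumptions. Coordinates are in a hypothetical vehicle frame (x forward, y to the left, metres). `Box2D`, `pursuit_search_region`, the 4 m look-back, and the 1 m padding are illustrative choices.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Box2D:
    """Axis-aligned region in a hypothetical vehicle frame (x forward, y left), metres."""
    x_min: float
    x_max: float
    y_min: float
    y_max: float

    def contains(self, x: float, y: float) -> bool:
        return self.x_min <= x <= self.x_max and self.y_min <= y <= self.y_max


def pursuit_search_region(ball_x: float, ball_y: float, vel_x: float, vel_y: float,
                          lookback_m: float = 4.0, pad_m: float = 1.0) -> Box2D:
    """Box covering the ground the ball just crossed, padded on all sides (assumed policy)."""
    speed = math.hypot(vel_x, vel_y)
    if speed < 1e-6:
        raise ValueError("ball is not moving, so the pursuit direction is undefined")
    # Unit vector pointing back along the ball's path.
    ux, uy = -vel_x / speed, -vel_y / speed
    tail_x, tail_y = ball_x + ux * lookback_m, ball_y + uy * lookback_m
    return Box2D(
        x_min=min(ball_x, tail_x) - pad_m,
        x_max=max(ball_x, tail_x) + pad_m,
        y_min=min(ball_y, tail_y) - pad_m,
        y_max=max(ball_y, tail_y) + pad_m,
    )


# Ball 15 m ahead on the centreline, rolling to the right (negative y) at 2 m/s.
region = pursuit_search_region(15.0, 0.0, vel_x=0.0, vel_y=-2.0)
print(region)  # Box2D(x_min=14.0, x_max=16.0, y_min=-1.0, y_max=5.0)
print(region.contains(15.0, 4.0))  # a child near the curb the ball came from
```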

Software Components

Computer Vision. iDAR is designed with computer vision, creating a smarter, more focused LiDAR point cloud that mimics the way humans perceive the environment. In order to effectively “see” the ball and the child, iDAR combines the camera’s 2D pixels with the LiDAR’s 3D voxels to create Dynamic Vixels. This combination helps the AI refine the LiDAR point clouds around the ball and the child, effectively eliminating all the irrelevant points and leaving only their edges.
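AEye describes Dynamic Vixels only at a high level. The sketch below shows a standard building block such a camera/LiDAR fusion would need. It projects each LiDAR point into the camera image with a pinhole model, attaches the pixel it lands on, and discards points that fall outside the object’s 2D box. It assumes the points are already expressed in the camera frame (x right, y down, z forward) and that the intrinsics `fx, fy, cx, cy` are known. The function names and tuple layout are hypothetical, not AEye’s data format.

```python
import math

# Hypothetical data layout for this sketch.
Point3 = tuple[float, float, float]
Rgb = tuple[int, int, int]


def project(p: Point3, fx: float, fy: float, cx: float, cy: float) -> tuple[float, float]:
    """Pinhole projection of a camera-frame point to pixel coordinates (u, v)."""
    x, y, z = p
    return fx * x / z + cx, fy * y / z + cy


def fuse_points_with_pixels(points, image, intrinsics, bbox):
    """Attach RGB to each 3D point projecting inside bbox = (u_min, v_min, u_max, v_max)."""
    fx, fy, cx, cy = intrinsics
    u_min, v_min, u_max, v_max = bbox
    height, width = len(image), len(image[0])
    fused: list[tuple[float, float, float, Rgb]] = []
    for p in points:
        if p[2] <= 0:
            continue  # behind the camera
        u, v = project(p, fx, fy, cx, cy)
        col, row = math.floor(u), math.floor(v)
        if not (0 <= col < width and 0 <= row < height):
            continue  # outside the image
        if not (u_min <= u <= u_max and v_min <= v <= v_max):
            continue  # outside the object's 2D box, treated as irrelevant
        fused.append((p[0], p[1], p[2], image[row][col]))
    return fused


# Toy 4x4 image with one red "ball" pixel.
image = [[(0, 0, 0)] * 4 for _ in range(4)]
image[2][2] = (255, 0, 0)
pts = [(0.0, 0.0, 10.0), (-5.0, -5.0, 10.0), (10.0, 0.0, 1.0), (0.0, 0.0, -5.0)]
print(fuse_points_with_pixels(pts, image, (2.0, 2.0, 2.0, 2.0), (1.5, 1.5, 3.0, 3.0)))
```

A real pipeline would first apply the LiDAR-to-camera extrinsic transform and lens-distortion correction. Both are omitted here.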

Cueing. A single LiDAR detection of the ball sets the first cue into motion. Immediately, the sensor flags the region where the ball appears, cueing the LiDAR to focus a Dynamic ROI on the ball. Cueing generates a dataset that is rich enough to apply perception algorithms for classification. If the camera lacks data (due to lighting conditions, etc.), the LiDAR cues itself to increase the point density around the ROI. This enables it to gather enough data to classify the object and determine whether it’s relevant.
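The cueing rule in this paragraph can be written as a small decision function. In the sketch below, `CameraReport`, its `usable` flag, and the 0.5 confidence threshold are hypothetical stand-ins for whatever the camera pipeline actually reports. The point is only the branching: a LiDAR ROI is always focused, and the LiDAR adds a self-cue for extra density when camera data is missing or weak.

```python
from dataclasses import dataclass
from enum import Enum, auto


class Cue(Enum):
    LIDAR_ROI = auto()           # focus a Dynamic ROI on the flagged region
    LIDAR_SELF_DENSIFY = auto()  # LiDAR cues itself to raise point density in the ROI


@dataclass(frozen=True)
class CameraReport:
    """Hypothetical summary of what the camera could see in the flagged region."""
    usable: bool       # False under glare, darkness, etc.
    confidence: float  # classification confidence in [0, 1]


def cues_for_detection(camera: CameraReport, min_confidence: float = 0.5) -> list[Cue]:
    """Cues issued after a single LiDAR detection (threshold is an assumption)."""
    cues = [Cue.LIDAR_ROI]
    if not camera.usable or camera.confidence < min_confidence:
        cues.append(Cue.LIDAR_SELF_DENSIFY)
    return cues


print(cues_for_detection(CameraReport(usable=True, confidence=0.9)))
print(cues_for_detection(CameraReport(usable=False, confidence=0.0)))
```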

Feedback Loops. Once the ball is detected, an algorithm generates a feedback loop that triggers the sensors to focus another ROI immediately behind the ball and to the side of the road to capture anything in pursuit, enabling faster and more accurate classification. This starts another cue. With that data, the system can classify whatever is behind the ball and determine its true velocity, so that it can decide whether to apply the brakes or swerve to avoid a collision.
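Determining “true velocity” means removing the vehicle’s own motion from what the sensor measures. The sketch below rests on three assumptions: the vehicle drives straight ahead at constant speed with no yaw, positions are in a hypothetical vehicle frame (x forward, y left, metres), and the decision rule is deliberately conservative. That rule flags the object if it will reach the vehicle’s lane corridor before the vehicle reaches it. The names, the 1 m corridor half-width, and the numbers are illustrative. The article places the actual brake-or-swerve decision with the domain controller.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """Hypothetical timestamped position in the vehicle frame."""
    t: float  # seconds
    x: float  # metres ahead of the vehicle
    y: float  # metres left (+) or right (-) of the vehicle centreline


def true_velocity(a: Observation, b: Observation, ego_speed: float) -> tuple[float, float]:
    """Ground velocity (vx, vy) from two observations, assuming straight-line ego motion."""
    dt = b.t - a.t
    if dt <= 0:
        raise ValueError("observations must be in time order")
    rel_vx = (b.x - a.x) / dt
    rel_vy = (b.y - a.y) / dt
    # A stationary object appears to approach at -ego_speed; add the ego motion back.
    return rel_vx + ego_speed, rel_vy


def enters_path_first(obs: Observation, vx: float, vy: float, ego_speed: float,
                      half_width: float = 1.0) -> bool:
    """Conservative check: will the object be in the lane corridor before the car arrives?"""
    closing = ego_speed - vx
    if obs.x <= 0 or closing <= 0:
        return False  # behind the car, or not closing
    t_arrive = obs.x / closing
    if abs(obs.y) <= half_width:
        return True  # already in the corridor
    if obs.y * vy >= 0:
        return False  # moving away from, or parallel to, the corridor
    t_enter = (abs(obs.y) - half_width) / abs(vy)
    return t_enter <= t_arrive


ego = 11.2  # m/s, roughly 25 mph
first = Observation(t=0.0, x=20.0, y=4.0)
second = Observation(t=0.5, x=14.4, y=2.5)  # child running toward the lane
vx, vy = true_velocity(first, second, ego)
print(round(vx, 2), round(vy, 2), enters_path_first(second, vx, vy, ego))
```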

The Value of AEye’s iDAR

LiDAR sensors embedded with AI for intelligent perception are vastly different from those that passively collect data. After detecting and classifying the ball, iDAR immediately foveates in the direction where the child will most likely enter the frame. This ability to understand the context of a scene enables iDAR to detect the child quickly and calculate the child’s speed of approach, so that the vehicle can brake or swerve to avoid a collision. To speed reaction times, each sensor’s data is processed intelligently at the edge of the network. Only the most salient data is then sent to the domain controller for advanced analysis and path planning, ensuring optimal safety.
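The article does not specify how iDAR decides which data is “most salient.” As an illustration only, the sketch below ranks objects by a hypothetical score: inverse time to contact, which is closing speed divided by distance. It then forwards just the top few objects above a threshold. `Detection`, `saliency`, `select_for_domain_controller`, and the thresholds are all assumptions.

```python
import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    """Hypothetical per-object summary produced at the sensor edge."""
    label: str
    distance_m: float
    closing_speed_mps: float  # positive when the gap is shrinking


def saliency(d: Detection) -> float:
    """Hypothetical score: inverse time to contact (1/s); non-closing objects score 0."""
    if d.closing_speed_mps <= 0:
        return 0.0
    return d.closing_speed_mps / max(d.distance_m, 1e-3)


def select_for_domain_controller(dets: list[Detection], k: int = 2,
                                 min_score: float = 0.05) -> list[Detection]:
    """Forward at most k detections, most urgent first, dropping low-scoring ones."""
    top = heapq.nlargest(k, dets, key=saliency)
    return [d for d in top if saliency(d) >= min_score]


scene = [
    Detection("ball", 10.0, 11.2),
    Detection("child", 12.0, 11.2),
    Detection("parked_car", 40.0, 11.2),
    Detection("receding_car", 20.0, -2.0),
]
print([d.label for d in select_for_domain_controller(scene)])
```

A production system would also weigh object class, position relative to the lane, and uncertainty. This sketch only illustrates filtering before transmission.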


Bus passes just became part of your employee benefits

“Commuting as benefits” is becoming increasingly popular as employers discover the advantages of having workers show up to work on time, rested and less stressed. For some, commuting to work is a quick trip by car or subway. But for most it’s long, tedious and involves multiple modes of transport. The worst part is, we…

Media Explores Where 3D Automotive Lidar is Headed with Velodyne CTO Anand Gopalan

November 19, 2019

Real-time 3D lidar is poised to be the third leg of the trifecta of sensor technologies enabling both advanced driver-assistance (ADAS) and autonomous vehicles, writes Ed Brown in a Photonics & Imaging Technology story. Brown spoke with Velodyne Lidar CTO Anand Gopalan about the current state of 3D automotive lidar and where…

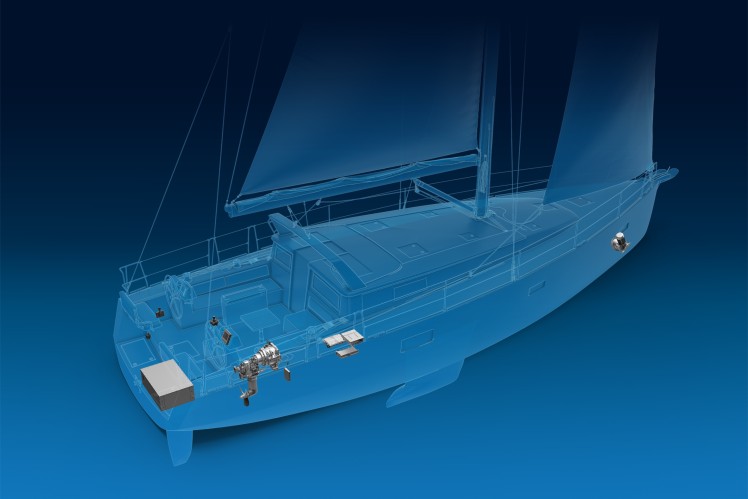

Press: E-volution for Sea Vessels: ZF Develops Fully Electric Propulsion System for Sailing Yachts

- Zero noise and zero emissions: full electric propulsion system makes sailing more environmentally friendly
- New technology boosts ZF’s leadership position in sustainable marine propulsion systems
- E-drive has proven its worth on the innovation ship since September 2019

Friedrichshafen. ZF is continuing to expand its portfolio of environment-friendly marine propulsion solutions. The new fully electric propulsion system…

Press: ZF and Wolong Electric Plan Joint Venture for Production of Electric Motors and Components

“The partnership with Wolong, a key player of electric motors and components in the Chinese market, is a great next step in further strengthening our electric mobility strategy. With the joint venture we extend our value chain for electric motors to include sub-components and it gives us even better access to customers and suppliers in…

NVIDIA Takes RTX, AI and CloudXR to Autodesk University

From architectural design to virtual productions, NVIDIA RTX is taking visualizations to the next level, and you can see it all at Autodesk University, which runs Nov. 18-21 in Las Vegas. Over 11,000 professionals from the engineering, construction and manufacturing industries will get a glimpse of how RTX accelerates design and visualization applications. A…

Record 136 NVIDIA GPU-Accelerated Supercomputers Feature in TOP500 Ranking

The new wave of supercomputers is largely GPU accelerated, the latest TOP500 list of the world’s fastest systems shows. Of the 102 new supercomputers to join the closely watched ranking, 42 use NVIDIA GPU accelerators, including AiMOS, the most powerful addition to the list, which was released this week. Coming in at No. 24,…

False Positive



Challenge: A Balloon Floating Across the Road

A vehicle equipped with an advanced driver assistance system (ADAS) is traveling down a residential block on a sunny afternoon when the air is relatively still. A balloon from a child’s birthday party comes floating across the road. It drifts down and ends up suspended almost motionless in the lane ahead. If the driver isn’t paying attention, this is a dangerous situation. The vehicle’s perception system must make a series of quick assessments to avoid causing an accident. Not only must it detect the object in front of it, it must also classify the object to determine whether it’s a threat. Only then can the vehicle’s domain controller decide that the balloon is not a threat and drive through it.

How Current Solutions Fall Short

Today’s advanced driver assistance systems (ADAS) have great difficulty detecting the balloon or classifying it fast enough to react in the safest way possible. Typically, ADAS sensors are trained to avoid activating the brakes for every anomaly on the road because it is assumed that a human driver is paying attention. As a result, in many cases they will let the car drive into such objects. In contrast, Level 4 and 5 self-driving vehicles are biased toward avoiding collisions. In this scenario, they’ll either undertake evasive maneuvers or slam on the brakes, creating an unnecessary incident or causing an accident.

Camera. It is extremely difficult for a camera to distinguish between soft and hard objects; everything is just pixels. In this case, perception training is practically impossible because in the real world, soft objects can appear in an almost infinite variety of shapes, forms, and colors. They may even take on human-like shapes in poor lighting conditions. Camera detection performance depends entirely on proper training on all possible permutations of a soft target’s appearance, in combination with the right conditions. Sun glare, shade, or nighttime operation will degrade performance.

Radar. An object’s material is of vital significance to radar. A soft object that contains no metal reflects almost no radio energy, so radar will miss the balloon altogether. Additionally, radar perception is typically tuned to disregard stationary objects; otherwise, it would be detecting thousands of objects as the vehicle advances through the environment. So even if the balloon is made of reflective metallic plastic, it is floating almost motionless in the air, and there might not be enough movement for the radar to register it. Therefore, radar will provide little, if any, value in correctly classifying the balloon and assessing it as a potential threat.
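Stationary-object filtering of this kind is commonly done by comparing each target’s measured range rate (Doppler) with what a stationary object would show, given the vehicle’s own speed. The minimal sketch below assumes straight-line ego motion and a 0.5 m/s threshold (an illustrative value). The target tuple layout and function names are hypothetical.

```python
import math

# Hypothetical target layout: (range_rate_mps, azimuth_deg, label).
Target = tuple[float, float, str]


def ground_radial_speed(range_rate_mps: float, azimuth_deg: float, ego_speed_mps: float) -> float:
    """Radial speed over the ground, with the vehicle's own motion removed.

    For a vehicle driving straight ahead, a stationary target at azimuth theta shows a
    range rate of -ego_speed * cos(theta), so adding that term back gives about 0.
    """
    return range_rate_mps + ego_speed_mps * math.cos(math.radians(azimuth_deg))


def keep_moving_targets(targets: list[Target], ego_speed_mps: float,
                        threshold_mps: float = 0.5) -> list[Target]:
    """Drop targets whose ground radial speed is under the (assumed) threshold."""
    return [t for t in targets
            if abs(ground_radial_speed(t[0], t[1], ego_speed_mps)) > threshold_mps]


ego = 10.0
targets = [
    (-ego * math.cos(math.radians(5.0)) + 0.1, 5.0, "drifting balloon"),
    (-ego + 6.0, 0.0, "car ahead moving at 6 m/s"),
]
print([label for _, _, label in keep_moving_targets(targets, ego)])
```

The balloon, drifting at about 0.1 m/s, is discarded as clutter even if it returns some energy. That is the failure mode described above.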

Camera + Radar. Together, camera and radar would be unable to assess the scenario and react correctly every time. The camera would try to detect the balloon, but depending on lighting and perception training, in many scenarios it would identify the balloon incorrectly or not at all. The camera will frequently be confused: it might identify the balloon as a pedestrian or something else for which the vehicle needs to brake. And radar will be unable to resolve the camera’s confusion because it typically won’t detect the balloon at all.

LiDAR. Unlike radar and camera, LiDAR is much more resilient to lighting conditions and to an object’s material. LiDAR would be able to determine the balloon’s 3D position in space to centimeter-level accuracy. However, conventional low-density scanning LiDAR falls short when it comes to providing sufficient data fast enough for classification and path planning. Typically, LiDAR detection algorithms require many laser points on an object over several frames before registering it as a valid object. A low-density LiDAR that passively scans its surroundings horizontally can struggle to achieve the required number of detections on soft, shape-shifting objects like balloons.
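The “many points over several frames” requirement is often implemented as an M-of-N confirmation rule. The sketch below is one such rule, not any specific vendor’s: an object is confirmed once at least `min_points` returns land on it in `m` of the last `n` frames. The five-point minimum echoes the figure quoted in the cargo example of this series. `m=3`, `n=5`, and the class name are assumptions.

```python
from collections import deque


class DetectionConfirmer:
    """Hypothetical M-of-N rule: at least `min_points` returns in `m` of the last `n` frames."""

    def __init__(self, min_points: int = 5, m: int = 3, n: int = 5) -> None:
        self.min_points = min_points
        self.m = m
        self.frame_hits: deque[bool] = deque(maxlen=n)

    def update(self, points_on_object: int) -> bool:
        """Record one frame and report whether the object is now confirmed."""
        self.frame_hits.append(points_on_object >= self.min_points)
        return sum(self.frame_hits) >= self.m


sparse = DetectionConfirmer()
print([sparse.update(p) for p in (2, 3, 6, 1, 2)])  # a few points per frame
dense = DetectionConfirmer()
print([dense.update(p) for p in (8, 9, 7)])         # ROI-style dense sampling
```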

Successfully Resolving the Challenge with iDAR

In this scenario, iDAR excels because it can gather sufficient data at the sensor level to classify the balloon and determine its distance, shape, and velocity before any data is sent to the domain controller. This is possible because as soon as there’s a single LiDAR detection of the balloon, iDAR immediately flags it with a Dynamic Region of Interest (ROI). The LiDAR then generates a dense pattern of laser pulses in the area, interrogating the balloon for additional information. All this takes place while iDAR continues to track the background environment to ensure it never misses new objects.
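Interrogating an ROI while still tracking the background implies splitting a finite number of laser shots per frame. The sketch below shows one simple policy. It assumes a fixed per-frame budget, a reserved background fraction (so the background scan never stops), and a weight for each ROI. The function name, the 30% reserve, and the weights are hypothetical, not AEye’s scheduler.

```python
def allocate_shots(budget: int, roi_weights: dict[str, float],
                   background_fraction: float = 0.3) -> dict[str, int]:
    """Split a per-frame shot budget between a background scan and weighted ROIs (sketch)."""
    if not 0.0 < background_fraction <= 1.0:
        raise ValueError("background_fraction must be in (0, 1]")
    if "background" in roi_weights:
        raise ValueError("'background' is reserved for the background scan")
    total_weight = sum(w for w in roi_weights.values() if w > 0)
    if total_weight == 0:
        return {"background": budget}  # nothing of interest: scan the whole scene
    alloc = {"background": int(budget * background_fraction)}
    remaining = budget - alloc["background"]
    for name, weight in roi_weights.items():
        alloc[name] = int(remaining * max(weight, 0.0) / total_weight)
    # Rounding leftovers go back to the background scan.
    alloc["background"] += budget - sum(alloc.values())
    return alloc


print(allocate_shots(10_000, {"balloon": 3.0, "roadside": 1.0}))
```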

Software Components and Data Types

Computer Vision. iDAR is designed with computer vision that creates a smarter, more focused LiDAR point cloud. In order to effectively “see” the balloon, iDAR combines the camera’s 2D pixels with the LiDAR’s 3D voxels to create Dynamic Vixels. This combination helps iDAR refine the LiDAR point cloud on the balloon, effectively eliminating all the irrelevant points.

Cueing. For safety, it’s essential to classify soft targets at range because their identities determine the vehicle’s specific and immediate response. To generate a dataset rich enough to apply perception algorithms for classification, the LiDAR cues the camera as soon as it detects an object, requesting deeper information about the object’s color, size, and shape. The perception system then reviews the pixels, running algorithms to determine the object’s possible identities. To gain additional insights, the camera in turn cues the LiDAR for more data, and the LiDAR allocates more shots to the object.

Feedback Loops. Intelligent iDAR sensors are capable of cueing each other for additional data, and they are also capable of cueing themselves. If the camera lacks data (due to light conditions, etc.), the LiDAR will generate a feedback loop that tells the sensor to “paint” the balloon with a dense pattern of laser pulses. This enables the LiDAR to gather enough data about the target’s size, speed, and direction to effectively aid the perception system in classifying the object without the benefit of camera data.
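The self-cueing feedback loop can be pictured as repeated interrogation passes with rising shot density, continuing until enough returns have accumulated for classification. In the sketch below, `interrogate` is a hypothetical callback standing in for one LiDAR pass over the ROI. The 50-point requirement, the doubling step, and the pass limit are assumptions. Accumulating points across passes also assumes the target stays roughly in place, which holds for a balloon hanging almost motionless.

```python
from typing import Callable


def paint_until_classifiable(interrogate: Callable[[float], int],
                             required_points: int = 50,
                             max_passes: int = 8,
                             density_step: float = 2.0) -> tuple[bool, int, int]:
    """Re-interrogate an ROI at increasing density until enough points accumulate.

    Returns (enough_points, passes_used, total_points). All parameters are assumptions.
    """
    density = 1.0
    total = 0
    for n in range(1, max_passes + 1):
        total += interrogate(density)  # one hypothetical LiDAR pass over the ROI
        if total >= required_points:
            return True, n, total
        density *= density_step
    return False, max_passes, total


# Toy sensor model: about 4 returns per pass at base density, scaling linearly.
print(paint_until_classifiable(lambda d: int(4 * d)))
```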

The Value of AEye’s iDAR

LiDAR sensors embedded with AI for intelligent perception are very different from those that passively collect data. When iDAR registers a single detection of a soft target in the road, its priority is classification. To avoid false positives, iDAR schedules a series of LiDAR shots in that area to determine whether it’s a balloon or something else, like a cement bag, a tumbleweed, or a pedestrian. iDAR can flexibly adjust point cloud density on and around objects of interest and then apply classification algorithms at the edge of the network. This ensures only the most important data is sent to the domain controller for optimal path planning.


Cargo Protruding from Vehicle



Challenge: Cargo Protruding from Vehicle

A vehicle equipped with an advanced driver assistance system (ADAS) is driving down a road at 20 mph. Directly ahead, a large pick-up truck stops abruptly. Its bed is filled with lumber, much of which is jutting out the back and into the lane. If the driver of the ADAS vehicle isn’t paying attention, this is a potentially fatal scenario. As the distance between the two vehicles quickly shrinks, the ADAS vehicle’s domain controller must make a series of critical assessments to identify the object and avoid a collision. However, this depends on its perception system’s ability to detect the lumber. Numerous factors can affect whether a detection takes place, including adverse lighting, weather, and road conditions.

How Current Solutions Fall Short

Today’s advanced driver assistance systems (ADAS) have great difficulty recognizing this threat or reacting appropriately. Depending on their sensor configuration and perception training, many will fail to register the cargo before it’s too late.

Camera. Cameras run into challenges in scenarios where depth perception is important. By their nature, camera images are two-dimensional. To an untrained perception system, cargo sticking out of a truck bed looks like small, elongated rectangles floating above the roadway. To interpret this 2D image in 3D, the perception system must be trained, which is difficult given the innumerable permutations of cargo shapes. The scenario becomes even more challenging depending on the time of day. In the afternoon, sunlight reflecting off the truck bed or shining directly into the camera can create blind spots, obscuring the cargo. At night, there may not be enough dynamic range in the camera image for the perception system to analyze the scene successfully. If the vehicle’s headlights are in low-beam mode, most of the light will pass underneath the lumber.

Radar. Radar detection is quite limited in scenarios where objects are small and stationary. Typically, radar perception systems disregard stationary objects because otherwise, there would be too many objects for the radar to track. In a scenario featuring narrow, non-reflective objects that are surrounded by reflections from the metal truck bed and parked cars, the radar would have great difficulty detecting the lumber at all.

Camera + Radar. Due to the deficiencies explained above, in most cases a system that combines radar with a camera would be unable to detect the lumber or react quickly. The perception system would need to be trained on an almost infinite variety of small stationary objects associated with all manner of vehicles in all possible light conditions. As for radar, many objects simply reflect radio waves poorly. As a result, radar will likely miss or disregard small, non-reflective stationary objects. In addition, radar would be incapable of compensating for the camera’s lack of depth perception.

LiDAR. Conventional LiDAR doesn’t struggle with depth perception, and its performance isn’t significantly affected by lighting conditions or by an object’s material and reflectivity. However, conventional LiDAR systems are limited because their scan patterns are fixed, as are their field of view, sampling density, and laser shot schedule. In this scenario, as the LiDAR passively scans the environment, its laser points will hit the small ends of the lumber only a few times. Typically, LiDAR perception systems require a minimum of five detections to register an object. Today’s 4-, 16-, and 32-channel systems would likely not collect enough detections early enough to determine that the object is present and poses a threat.
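A rough estimate shows why a fixed scan pattern struggles here. The expected number of returns per frame on a small target is roughly its angular width divided by the horizontal step, times its angular height divided by the vertical (channel) spacing. The following values are illustrative assumptions, not specifications of any particular sensor: a 0.5 m by 0.2 m target at 30 m, a 0.2° horizontal step, 2° and 1.33° channel spacings, and a 0.05° dense ROI step.

```python
import math


def angular_extent_deg(size_m: float, range_m: float) -> float:
    """Angle subtended by an object of the given size at the given range."""
    return math.degrees(2 * math.atan(size_m / (2 * range_m)))


def expected_points(width_m: float, height_m: float, range_m: float,
                    h_step_deg: float, v_step_deg: float) -> float:
    """Expected returns per frame on a small target for a uniform, fixed scan pattern."""
    columns = angular_extent_deg(width_m, range_m) / h_step_deg
    rows = angular_extent_deg(height_m, range_m) / v_step_deg
    return columns * rows


# Illustrative assumptions: lumber ends about 0.5 m wide and 0.2 m tall, 30 m ahead.
for label, v_step in (("2.0 deg channel spacing", 2.0), ("1.33 deg channel spacing", 1.33)):
    print(label, round(expected_points(0.5, 0.2, 30.0, 0.2, v_step), 2))
print("0.05 deg ROI sampling", round(expected_points(0.5, 0.2, 30.0, 0.05, 0.05), 1))
```

Under these assumptions, the fixed patterns yield roughly one return per frame on the lumber, far short of five. Dense ROI sampling raises the count by about two orders of magnitude.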

Successfully Resolving the Challenge with iDAR

Accurately measuring distance is crucial to solving this challenge. A single LiDAR detection causes iDAR to immediately flag the cargo as a potential threat. At that point, a quick series of LiDAR shots is scheduled, directly targeting the cargo and the area around it. By dynamically changing both the LiDAR’s temporal and spatial sampling density, iDAR can comprehensively interrogate the cargo to gain critical information, such as its position in space and distance ahead. Only the most useful and actionable data is sent to the domain controller for planning the safest response.

Software Components

Computer Vision. iDAR combines 2D camera pixels with 3D LiDAR voxels to create Dynamic Vixels. This data type helps the system’s AI refine the LiDAR point cloud on and around the cargo, effectively eliminating all the irrelevant points and creating information from discrete data.

Cueing. As soon as iDAR registers a single detection of the cargo, the sensor flags the region where the cargo appears and cues the camera for deeper real-time analysis of its color, shape, and so on. If lighting conditions are favorable, the camera’s AI reviews the pixels to see whether there are distinct differences in that region. If there are, it sends detailed data back to the LiDAR, cueing the LiDAR to focus a Dynamic Region of Interest (ROI) on the cargo. If the camera lacks data, the LiDAR cues itself to increase the point density on and around the detected object, creating an ROI.

Feedback Loops. A feedback loop is triggered when an algorithm needs additional data from sensors. In this scenario, a feedback loop will be triggered between the camera and the LiDAR. The camera can cue the LiDAR, and the LiDAR can cue additional interrogation points, or a Dynamic Region of Interest, to determine the cargo’s location, size, and true velocity. Once enough data has been gathered, it will be sent to the domain controller so that it can decide whether to apply the brakes or swerve to avoid a collision.

The Value of AEye’s iDAR

LiDAR sensors embedded with AI for intelligent perception are very different from those that passively collect data. As soon as the perception system registers a single valid LiDAR detection of an object extending into the road, iDAR responds intelligently. The LiDAR instantly modifies its scan pattern, increasing laser shots to cover the cargo in a dense pattern of laser pulses. Camera data is used to refine this information. Once the cargo has been classified and its position in space and distance ahead determined, the domain controller can understand that the cargo poses a threat. At that point, it plans the safest response.
